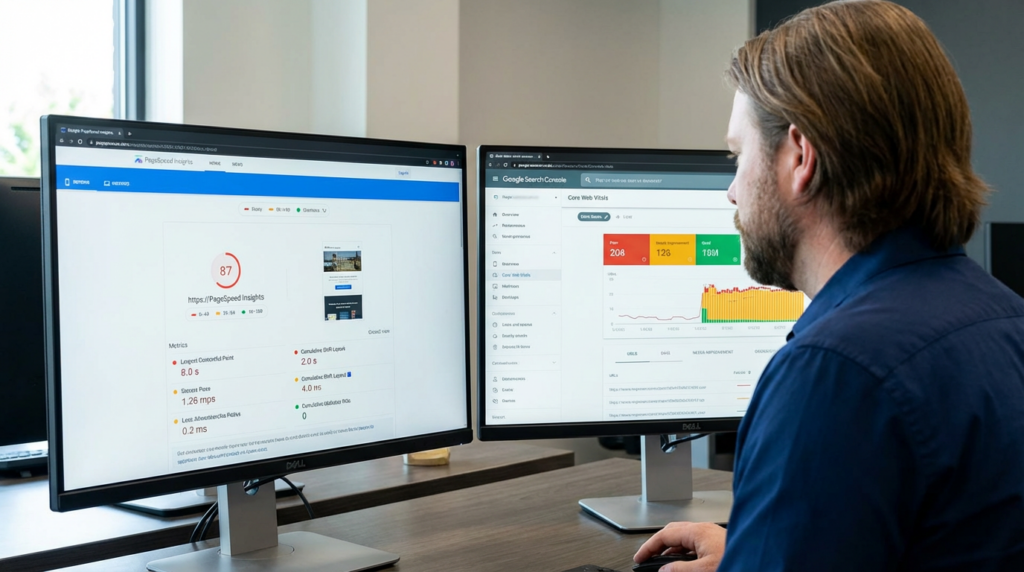

I recently had a prospective client send me a screenshot of their Google PageSpeed Insights report. It showed a near-perfect 98/100 on mobile. They were thrilled. They thought they had solved their site speed issues.

I then pulled up their actual user data in the Chrome User Experience Report (CrUX). Their real-world Interaction to Next Paint (INP) at the 75th percentile was over 500ms, which Google classifies as “poor.” Users were clicking “Add to Cart,” waiting half a second for a response, and then abandoning the session in frustration.

The “Green” score was a lab simulation. The user frustration was reality.

If you are a Marketing Director chasing a perfect score on a testing tool while ignoring how your actual customers experience your site, you are optimizing for a vanity metric, not for revenue. This is one of the most dangerous misconceptions in Technical SEO today.

Speed is a Revenue Metric, Not a Vanity Metric

Let’s be clear about what is at risk here. We are not trying to impress a Google bot; we are trying to facilitate a transaction.

In the world of E-commerce Growth, milliseconds equal dollars. Amazon famously calculated that a one-second slowdown could cost them $1.6 billion in sales per year. For the mid-market e-commerce sites I work with, the same pattern holds: latency kills conversion rates.

If your PageSpeed score is green because you delayed all your JavaScript execution until after the initial load, you might trick the tool. But when a real user tries to open your menu or filter a product list and the interface is frozen because the browser is suddenly busy processing that delayed code, the user experience is broken.

You are effectively trading a good test score for a bad customer experience. That is a losing Digital Strategy.

Lab Data vs. Field Data

To understand why the score lies, you have to understand the difference between Lab Data and Field Data.

Lab Data (the Lighthouse run that produces the 0-100 Performance score in PageSpeed Insights) is a simulation. It is a single test, run on a single emulated device, with a throttled network speed, from a specific location. It is useful for debugging, but it is not the real world.

Field Data (the Core Web Vitals assessment) is aggregated from real Chrome users who visited your site over the previous 28 days, as collected in the Chrome User Experience Report (CrUX). This is the source of truth.
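To put the field number next to the lab score, the 75th-percentile INP can be pulled from the CrUX API and rated against Google's published INP thresholds (200ms or less is good, above 500ms is poor). This is a minimal sketch assuming the public `records:queryRecord` endpoint and a valid API key; `fetchFieldInp` and `classifyInp` are hypothetical helper names, not part of any library.

```typescript
// Google's published INP thresholds, in milliseconds, applied at the 75th percentile.
const INP_GOOD_MAX_MS = 200;
const INP_POOR_ABOVE_MS = 500;

type InpRating = "good" | "needs-improvement" | "poor";

// Hypothetical helper: rate a field p75 INP value using the thresholds above.
function classifyInp(p75Ms: number): InpRating {
  if (p75Ms <= INP_GOOD_MAX_MS) return "good";
  if (p75Ms <= INP_POOR_ABOVE_MS) return "needs-improvement";
  return "poor";
}

// Minimal shape of the part of a CrUX API response this sketch reads.
interface CruxResponse {
  record: {
    metrics: {
      interaction_to_next_paint?: { percentiles: { p75: number | string } };
    };
  };
}

// Hypothetical helper: query the CrUX API for an origin's mobile p75 INP.
// Assumes a valid API key; returns null when the request fails or CrUX has no INP data.
async function fetchFieldInp(origin: string, apiKey: string): Promise<number | null> {
  const res = await fetch(
    `https://chromeuxreport.googleapis.com/v1/records:queryRecord?key=${apiKey}`,
    {
      method: "POST",
      headers: { "Content-Type": "application/json" },
      body: JSON.stringify({
        origin,
        formFactor: "PHONE",
        metrics: ["interaction_to_next_paint"],
      }),
    },
  );
  if (!res.ok) return null;
  const data = (await res.json()) as CruxResponse;
  const p75 = data.record.metrics.interaction_to_next_paint?.percentiles.p75;
  return p75 === undefined ? null : Number(p75);
}
```

Usage would look like `const p75 = await fetchFieldInp("https://www.example.com", "YOUR_API_KEY");` followed by `classifyInp(p75)` when the result is not null. A 98/100 lab score sitting next to a `"poor"` rating here is exactly the discrepancy worth investigating.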

Here is how I audit this discrepancy for my clients using a forensic approach:

1. Analyzing the “Total Blocking Time” (TBT)

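A site can load visually (giving you a good Largest Contentful Paint, or LCP, score) but still be unresponsive. This happens when the main thread is blocked by heavy JavaScript tasks. Total Blocking Time measures exactly that in the lab: it adds up how far each main-thread task runs past 50ms between First Contentful Paint and Time to Interactive, which makes it the closest lab proxy for INP.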

- I use the Performance tab in Chrome DevTools to profile the runtime.

- I look for “Long Tasks” (anything over 50ms).

- If I see third-party trackers, chat widgets, or poorly optimized Tag Management firing all at once, I know the user is clicking on a dead screen.
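The arithmetic behind TBT is simple enough to sketch: for every task, only the time beyond 50ms counts as blocking, and the sum over the measurement window is the TBT. This simplified version counts tasks that start inside a caller-supplied window (Lighthouse's exact handling of tasks straddling the window edges may differ). `computeTotalBlockingTime` and `observeLongTasks` are hypothetical names, and the observer assumes a Chromium browser that supports the `longtask` entry type.

```typescript
// One main-thread task, as reported by a "longtask" PerformanceEntry (times in ms).
interface TaskRecord {
  startTime: number;
  duration: number;
}

// Tasks longer than this block input; only the excess counts toward TBT.
const BLOCKING_THRESHOLD_MS = 50;

// Simplified Total Blocking Time: sum of (duration - 50ms) for every task that
// starts inside [windowStart, windowEnd), e.g. between FCP and TTI.
function computeTotalBlockingTime(
  tasks: TaskRecord[],
  windowStart = 0,
  windowEnd = Number.POSITIVE_INFINITY,
): number {
  return tasks
    .filter((t) => t.startTime >= windowStart && t.startTime < windowEnd)
    .reduce((total, t) => total + Math.max(0, t.duration - BLOCKING_THRESHOLD_MS), 0);
}

// Browser-only helper: report long tasks as they happen.
// Register it as early as possible in page load so early tasks are not missed.
function observeLongTasks(onTask: (task: TaskRecord) => void): void {
  if (typeof PerformanceObserver === "undefined") return;
  const observer = new PerformanceObserver((list) => {
    for (const entry of list.getEntries()) {
      onTask({ startTime: entry.startTime, duration: entry.duration });
    }
  });
  observer.observe({ type: "longtask" });
}
```

For example, three tasks of 120ms, 40ms, and 80ms contribute 70ms, 0ms, and 30ms, for a TBT of 100ms: the 40ms task is harmless, while one 120ms tag execution does most of the damage.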

2. The GTM Bloat

I frequently see Google Tag Manager containers that have become digital landfills. Marketing teams add pixels for every new ad platform—Facebook, TikTok, LinkedIn, Hotjar, Criteo—and never remove them.

- Each of these tags downloads code.

- Each execution eats up CPU cycles on your customer’s mobile device.

- I audit the container to pause or remove legacy tags. If a tag hasn’t fired in 6 months, it is dead weight.
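The six-month rule needs a last-fired date per tag, which typically comes from your own tag monitoring or logging rather than from the container itself. Assuming such an export exists, with one row per tag, the check reduces to a date comparison. `TagRecord` and `findStaleTags` are hypothetical names for illustration.

```typescript
// Hypothetical export format: one row per GTM tag with the last time it fired.
interface TagRecord {
  name: string;
  paused: boolean;
  lastFired: Date | null; // null = never observed firing
}

const STALE_AFTER_MONTHS = 6;

// Returns active tags that have not fired within the last `months` months
// (calendar months, computed in UTC): candidates to pause or remove.
function findStaleTags(tags: TagRecord[], now: Date, months = STALE_AFTER_MONTHS): string[] {
  const cutoff = new Date(now.getTime());
  cutoff.setUTCMonth(cutoff.getUTCMonth() - months);
  return tags
    .filter(
      (t) => !t.paused && (t.lastFired === null || t.lastFired.getTime() < cutoff.getTime()),
    )
    .map((t) => t.name);
}
```

Tags that never show up in the firing data are treated as stale too, on the reasoning that an unobserved tag is either broken or irrelevant; either way it deserves a look before it keeps shipping code to every visitor.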

3. Real User Monitoring (RUM) in GA4

I do not trust the aggregated CrUX report alone because it reports the 75th percentile over a rolling 28-day window. It is too slow to react to code pushes: a regression you ship today can take weeks to show up fully.

- I configure custom dimensions in GA4 to capture web vitals for individual sessions.

- This allows me to correlate speed metrics directly with ROI. I can show you a report that says: “Sessions with an LCP under 2.5 seconds have a conversion rate of 3%, while sessions over 4 seconds convert at 0.5%.”

- That is data you can take to your CFO.
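A report like that has two pieces: sending each vitals measurement to GA4, and grouping sessions by LCP to compare conversion rates. This is a minimal sketch of both as plain functions. The event-parameter shape follows the `gtag` pattern shown in the open-source `web-vitals` library's README; `toGa4Params`, `conversionRateByLcp`, and the `SessionRow` export format are hypothetical, and the 2.5s and 4s cut-offs are Google's LCP "good" and "poor" thresholds.

```typescript
// Subset of the Metric object passed to web-vitals callbacks such as onLCP/onINP.
interface VitalsMetric {
  name: string; // e.g. "LCP", "INP", "CLS"
  value: number;
  delta: number;
  id: string;
}

// Event parameters in the shape the web-vitals README uses with gtag('event', name, params).
function toGa4Params(metric: VitalsMetric): Record<string, number | string> {
  return {
    value: metric.delta,
    metric_id: metric.id,
    metric_value: metric.value,
    metric_delta: metric.delta,
  };
}

// Hypothetical per-session export: the session's LCP (ms) and whether it converted.
interface SessionRow {
  lcpMs: number;
  converted: boolean;
}

type LcpBucket = "good (<=2.5s)" | "needs improvement" | "poor (>4s)";

// Buckets follow Google's LCP thresholds: <=2500ms good, >4000ms poor.
function lcpBucket(lcpMs: number): LcpBucket {
  if (lcpMs <= 2500) return "good (<=2.5s)";
  if (lcpMs <= 4000) return "needs improvement";
  return "poor (>4s)";
}

// Conversion rate per LCP bucket; buckets with no sessions are omitted.
function conversionRateByLcp(sessions: SessionRow[]): Map<LcpBucket, number> {
  const counts = new Map<LcpBucket, { sessions: number; conversions: number }>();
  for (const s of sessions) {
    const key = lcpBucket(s.lcpMs);
    const c = counts.get(key) ?? { sessions: 0, conversions: 0 };
    c.sessions += 1;
    if (s.converted) c.conversions += 1;
    counts.set(key, c);
  }
  const rates = new Map<LcpBucket, number>();
  counts.forEach((c, key) => rates.set(key, c.conversions / c.sessions));
  return rates;
}
```

In the browser, the wiring would be along the lines of `onLCP((m) => gtag("event", m.name, toGa4Params(m)))`, assuming `web-vitals` is installed and `gtag.js` is loaded. The session-level grouping then runs on exported GA4 data, for example via the BigQuery export.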

Context Over Checklists

The industry is obsessed with “Best Practices.” They want a checklist that says “Do X, Y, and Z, and you will rank #1.”

I don’t believe in checklists. I believe in context.

A headless Magento site with a React frontend faces completely different challenges than a Shopify template. “Lazy loading images” is a best practice, right? Not if you lazy load your LCP (Largest Contentful Paint) image above the fold. I see this constantly—developers applying a standard optimization blindly, which actually hurts the user experience by making the hero banner pop in late.
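One way to catch this mistake is to ask which element Chrome chose as the LCP candidate and check whether it carries `loading="lazy"`. This sketch assumes a Chromium browser that supports the `largest-contentful-paint` entry type, whose entries expose an `element` property; `isLazyLoadedImage` and `warnIfLcpImageIsLazy` are hypothetical helper names.

```typescript
// Minimal element shape needed for the check (a real DOM Element satisfies it).
interface ElementLike {
  tagName: string;
  getAttribute(name: string): string | null;
}

// True when the element is an <img> that the browser was told to lazy-load.
function isLazyLoadedImage(el: ElementLike | null): boolean {
  if (el === null || el.tagName.toUpperCase() !== "IMG") return false;
  return (el.getAttribute("loading") ?? "").toLowerCase() === "lazy";
}

// Browser-only: warn if the latest LCP candidate is a lazy-loaded image.
function warnIfLcpImageIsLazy(): void {
  if (typeof PerformanceObserver === "undefined") return;
  const observer = new PerformanceObserver((list) => {
    const entries = list.getEntries();
    // LCP entries carry the candidate element; the last entry is the current candidate.
    const latest = entries[entries.length - 1] as PerformanceEntry & {
      element?: ElementLike | null;
    };
    if (latest && isLazyLoadedImage(latest.element ?? null)) {
      console.warn("LCP element is lazy-loaded:", latest.element);
    }
  });
  observer.observe({ type: "largest-contentful-paint", buffered: true });
}
```

Running `warnIfLcpImageIsLazy()` on a product or landing page flags the late hero banner case directly, instead of relying on a generic "lazy load everything" rule.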

My philosophy as a Digital Architect is that Data Integrity comes first. We don’t optimize for a score; we optimize for the specific constraints of your infrastructure and your user base.

I am based in Boise, Idaho, and I often tell my clients that I approach their website the same way a structural engineer approaches a building. I don’t care if the paint looks nice if the load-bearing walls are cracking. We fix the structure first.

Conclusion

A green 100/100 score is nice to frame on the wall, but it doesn’t deposit money in the bank.

If you are seeing a disconnect between your reported speed scores and your actual conversion rates, or if you suspect your “optimizations” are actually hurting usability, you need a forensic audit, not another automated report.

You need to look at the field data, clean up your JavaScript execution, and prioritize the metrics that actually impact the user’s ability to buy.

Would you like me to run a Core Web Vitals audit comparing your Lab Data against your Real User Metrics to see if your score is hiding a conversion killer?