Most ecommerce stores are still playing keyword bingo in 2026. That’s a problem.

TL;DR: Search engines no longer just match keywords; they interpret intent. If your product pages are optimized for “men’s waterproof running shoes” instead of the concept of weather-resistant athletic footwear, you’re invisible to the AI-driven engines that now control 40%+ of search traffic. Semantic search isn’t the future. It’s already here, and your competitors who understand entity relationships are eating your lunch.

I’ve audited over 200 ecommerce sites in the past eighteen months. The pattern is always the same: obsessive keyword density checks, perfectly optimized title tags, clean URL structures. Then I ask one question: “Can an LLM cite your products as authoritative?”

Silence.

The Death of String Matching

For twenty years, SEO was a matching game. You found high-volume phrases in Ahrefs or SEMrush, stuffed them into H1s and meta descriptions, and waited for Googlebot to crawl. The algorithm was essentially a very sophisticated word processor that counted matches and backlinks.

That system is dead.

According to Search Engine Journal’s January 2026 enterprise SEO report, users are now engaging in “multi-platform conversations” rather than linear searches. They start on ChatGPT, move to Perplexity, then cross-check on Google. Each of those platforms uses Large Language Models that don’t care about your exact-match anchor text. They care about entities: discrete concepts that relate to each other in a knowledge graph.

When someone searches for “warmest boots for Boise winters,” they aren’t looking for those four keywords. They’re looking for:

- Insulation ratings (200g Thinsulate minimum)

- Waterproof membranes (Gore-Tex, eVent)

- Temperature ratings (-20°F or lower)

- Local terrain compatibility (icy sidewalks, slushy trails)

If your product description says “warm winter boots” without structured data defining those attributes, you don’t exist in the semantic layer. You’re just noise.
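As a rough sketch of what “structured data defining those attributes” could look like, here is a small Python snippet that builds Schema.org Product JSON-LD with `additionalProperty` entries. `Product`, `additionalProperty`, `PropertyValue`, and `unitText` are real Schema.org terms, but the product name, the values, and the function name `boot_jsonld` are made up for illustration, and which attributes any given engine actually reads is an assumption.

```python
import json

def boot_jsonld(name, insulation_g, min_temp_f, membrane):
    """Build Product JSON-LD exposing boot attributes as PropertyValue entries."""
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "additionalProperty": [
            {"@type": "PropertyValue", "name": "Insulation",
             "value": insulation_g, "unitText": "g Thinsulate"},
            {"@type": "PropertyValue", "name": "Temperature rating",
             "value": min_temp_f, "unitText": "°F"},
            {"@type": "PropertyValue", "name": "Waterproof membrane",
             "value": membrane},
        ],
    }

# Hypothetical product; the values are invented for this example.
markup = boot_jsonld("Example Winter Boot", 400, -25, "Gore-Tex")
print(json.dumps(markup, indent=2, ensure_ascii=False))
```

The point is that “warm” becomes a number with a unit, which a machine can compare against a query constraint.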

What Semantic Search Actually Means (And Why You’re Doing It Wrong)

Semantic search is meaning-based retrieval powered by entity recognition and contextual relationships. Google’s MUM (Multitask Unified Model) and BERT don’t read your content linearly. They extract entities (products, materials, brands, specifications) and map the connections between them.

Here’s what that looks like in practice. A user searches: “shoes for a summer wedding that won’t kill my feet.”

Old keyword approach: Your category page ranks because it says “summer wedding shoes” three times.

Semantic approach: The LLM recognizes the query contains:

- Event type (formal occasion)

- Seasonal context (warm weather)

- Comfort constraint (extended wear, likely standing/dancing)

- Pain avoidance (cushioning, arch support)

The engine then looks for products with structured attributes that match those constraints: formal dress shoes with memory foam insoles, breathable leather, cushioned midsoles, and positive reviews mentioning “comfortable for hours.” Your exact phrasing doesn’t matter. Your data structure does.
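To make the idea concrete, here is a deliberately simplified Python sketch of constraint matching. Real engines use learned models rather than set intersection, so treat this as a toy: the `Product` class, the attribute labels, and the catalog entries are all hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class Product:
    name: str
    attributes: set[str] = field(default_factory=set)

def match_score(product: Product, constraints: set[str]) -> float:
    """Fraction of query constraints satisfied by the product's attributes."""
    if not constraints:
        return 0.0
    return len(constraints & product.attributes) / len(constraints)

# Hypothetical constraints derived from the wedding-shoe query above.
constraints = {"formal", "breathable", "cushioned", "arch_support"}

catalog = [
    Product("Leather Oxford", {"formal", "breathable", "cushioned", "arch_support"}),
    Product("Patent Pump", {"formal"}),
    Product("Trail Sneaker", {"breathable", "cushioned"}),
]

# Rank by how many constraints each product can verifiably satisfy.
ranked = sorted(catalog, key=lambda p: match_score(p, constraints), reverse=True)
for p in ranked:
    print(f"{p.name}: {match_score(p, constraints):.2f}")
```

Notice that the phrase “summer wedding shoes” appears nowhere in the data. A product only scores if its attributes are explicitly recorded.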

According to SUSO Digital’s 2026 predictions, being cited by an AI agent is now more valuable than ranking #3 organically. If Perplexity or Google’s AI Overview can’t verify your product meets the query’s constraints, you’re skipped entirely, even if you have the perfect product.

This is why conversion rate optimization and technical SEO are converging. It’s the same problem: machines need clean data to make decisions.

The Three Technical Pivots You’re Not Making

Most ecommerce stores are optimized for 2018. Here’s how to fix that.

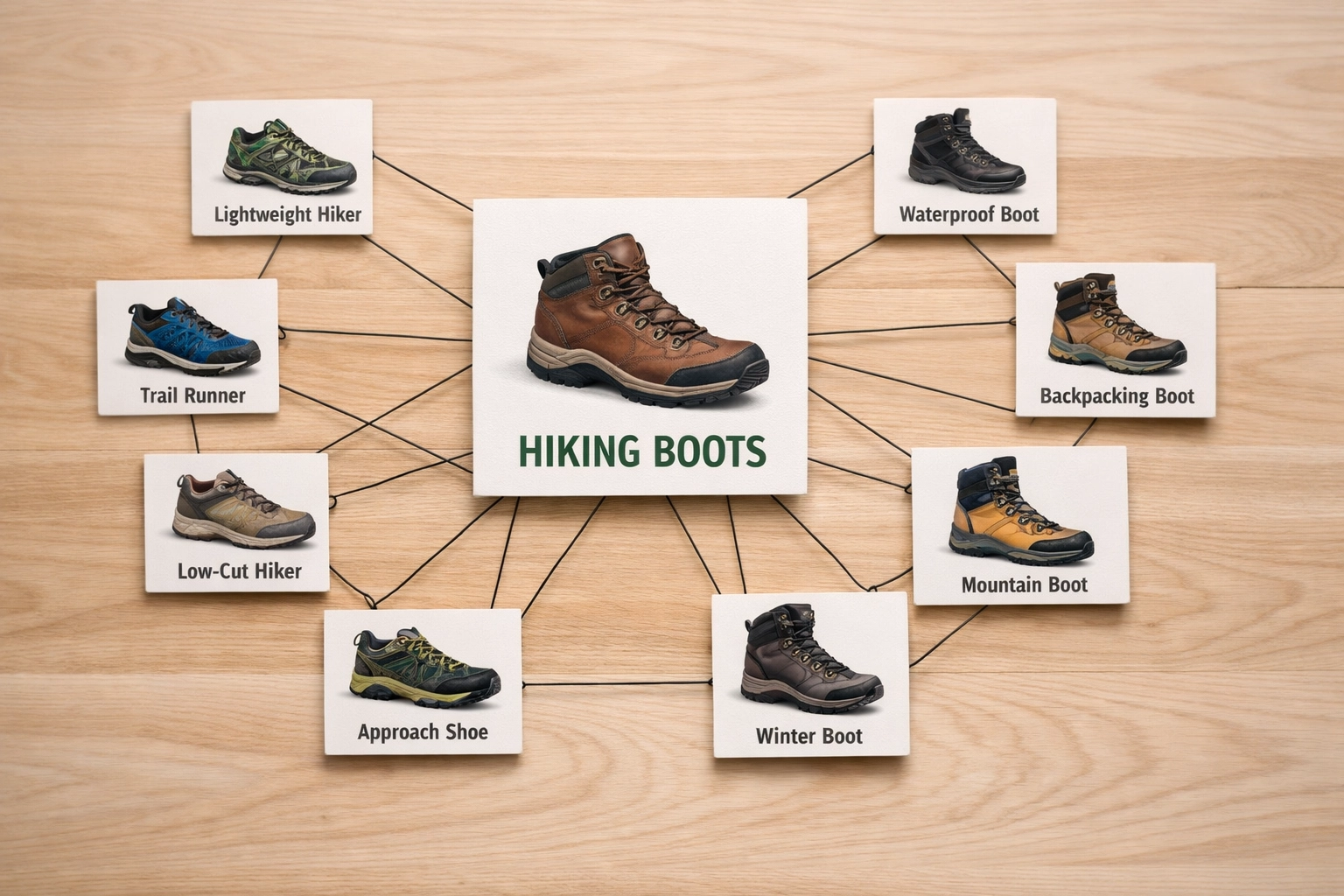

1. Build Knowledge Graphs, Not Category Trees

Stop thinking of your site as a list of products organized by category. Think of it as a knowledge base where entities have explicit relationships.

A product isn’t just “Merrell Moab 3 Waterproof Hiking Boot.” It’s:

- A hiking boot (category entity)

- Made by Merrell (brand entity)

- Contains a waterproof membrane (material entity)

- Rated for 3-season use (usage context entity)

- Has Vibram outsole (component entity)

Those relationships need to be expressed in structured data: Schema.org Product markup at minimum. But the real power comes from creating content that explains those relationships.
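One way to picture those relationships is as subject-predicate-object triples. The Python sketch below is only an illustration: the predicate names (`is_a`, `made_by`, and so on) are labels I made up for this example rather than Schema.org properties, and the second product is hypothetical.

```python
from collections import defaultdict

MOAB = "Merrell Moab 3 Waterproof Hiking Boot"
OTHER = "Example Trail Runner"  # hypothetical second product

# (subject, predicate, object) triples describing each product's entities.
triples = [
    (MOAB, "is_a", "hiking boot"),
    (MOAB, "made_by", "Merrell"),
    (MOAB, "rated_for", "3-season use"),
    (MOAB, "has_component", "Vibram outsole"),
    (OTHER, "is_a", "trail running shoe"),
    (OTHER, "has_component", "Vibram outsole"),
]

def index_by_fact(triples):
    """Map each (predicate, object) pair to the set of subjects that have it."""
    index = defaultdict(set)
    for subject, predicate, obj in triples:
        index[(predicate, obj)].add(subject)
    return index

index = index_by_fact(triples)
# Products that share a component entity, regardless of category.
print(sorted(index[("has_component", "Vibram outsole")]))
```

A category tree can only answer “what is in Hiking Boots?” A graph like this can also answer “what else uses this outsole?”, which is the kind of question a semantic query implies.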

Golden Fact: Sites with topical authority rank 3.2x higher for semantic queries than sites with isolated product pages.

This is where topic clusters come in. Instead of just having a “Hiking Boots” category page, you create a pillar page: “The Complete Guide to Selecting Hiking Footwear by Terrain and Climate.” That page links to:

- Product categories (waterproof boots, trail runners, approach shoes)

- Educational content (understanding Gore-Tex vs eVent)

- Comparison guides (heavy boots vs ultralight)

- Use case scenarios (desert hiking, winter mountaineering)

This isn’t blog fluff. It’s entity mapping that tells search engines you are an authoritative source on the entire domain of hiking footwear. Jasmine Directory’s analysis found that topical authority clusters generate 47% more organic traffic than traditional category structures.
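If you want to sanity-check a cluster, a sketch like the following can flag gaps in the internal linking. The URL paths are hypothetical, and the requirement that cluster pages link back to the pillar is my assumption about good practice, not something the reports above specify.

```python
# Hypothetical internal link map: page path -> set of pages it links to.
links = {
    "/guides/hiking-footwear": {
        "/c/waterproof-boots",
        "/learn/gore-tex-vs-event",
        "/compare/heavy-vs-ultralight",
    },
    "/c/waterproof-boots": {"/guides/hiking-footwear"},
    "/learn/gore-tex-vs-event": {"/guides/hiking-footwear"},
    "/compare/heavy-vs-ultralight": set(),
    "/use/desert-hiking": {"/guides/hiking-footwear"},
}

def cluster_gaps(pillar, cluster_pages, links):
    """Return (pages the pillar fails to link to, pages that fail to link back)."""
    pillar_links = links.get(pillar, set())
    missing_from_pillar = sorted(p for p in cluster_pages if p not in pillar_links)
    missing_backlink = sorted(p for p in cluster_pages if pillar not in links.get(p, set()))
    return missing_from_pillar, missing_backlink

cluster = [p for p in links if p != "/guides/hiking-footwear"]
not_linked, no_backlink = cluster_gaps("/guides/hiking-footwear", cluster, links)
print("Pillar does not link to:", not_linked)
print("No link back to pillar:", no_backlink)
```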

2. Feed the Machines (Structured Data Is Non-Negotiable)

Schema.org markup is no longer a “nice to have.” It’s the bridge between your products and the LLMs that recommend them.

Here’s what you need at bare minimum:

- Product schema (name, description, brand, SKU, price, availability)

- Review and AggregateRating schema (ratingValue, reviewCount)

- Offer schema (price, priceCurrency, availability, itemCondition)

- Organization schema (your brand as an entity)
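For reference, a minimal version of that markup might be generated like this. It is a sketch, not a complete implementation: the helper names `product_jsonld` and `to_script_tag` and all sample values are hypothetical, and the availability and condition values are hard-coded only to keep the example short.

```python
import json

def product_jsonld(name, description, brand, sku, price, currency, rating, review_count):
    """Assemble Product markup with nested Offer, AggregateRating, and brand."""
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "sku": sku,
        "brand": {"@type": "Organization", "name": brand},
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            # Hard-coded for the example; real values should come from inventory data.
            "availability": "https://schema.org/InStock",
            "itemCondition": "https://schema.org/NewCondition",
        },
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating,
            "reviewCount": review_count,
        },
    }

def to_script_tag(data):
    """Serialize to a JSON-LD script tag, escaping '</' so it cannot close the tag early."""
    payload = json.dumps(data).replace("</", "<\\/")
    return f'<script type="application/ld+json">{payload}</script>'

data = product_jsonld(
    "Example Hiking Boot",
    "Mid-height leather hiking boot with a waterproof membrane and lugged rubber outsole.",
    "Example Brand", "EX-1001", 129.99, "USD", 4.6, 212,
)
snippet = to_script_tag(data)
print(snippet)
```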

But here’s where most stores fail: they implement the markup but fill it with garbage data. I recently audited a Shopify store that had Product schema on every page. Perfect, right?

Wrong.

The “description” field was pulling from a meta description that said “Buy [product name] online at [store name]. Free shipping.” That’s not a description; it’s keyword spam that confuses the entity extraction. The LLM can’t determine what the product is from that string.

Your structured data needs to answer:

- What is this product? (detailed description with materials, dimensions, use case)

- Who makes it? (brand entity with separate Organization markup)

- What are its attributes? (color, size, material, weight, technical specs)

- What do customers say? (real review data with ratings and dates)
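A quick audit for this kind of garbage data can be scripted. The sketch below is a heuristic, assuming your markup is available as a Python dict: the required-field list, the 25-word threshold, and the boilerplate pattern are my own assumptions and should be tuned to your catalog.

```python
import re

REQUIRED = ("name", "description", "brand", "sku", "offers", "aggregateRating")

# Heuristic pattern for templated filler such as "Buy X online at Y. Free shipping."
BOILERPLATE = re.compile(r"\s*buy\b.*\bonline\b", re.IGNORECASE)

def audit(product: dict, min_description_words: int = 25) -> list[str]:
    """Return a list of human-readable problems found in one product's markup."""
    problems = [f"missing field: {key}" for key in REQUIRED if not product.get(key)]
    description = product.get("description", "")
    if description:
        if BOILERPLATE.match(description):
            problems.append("description looks like templated filler")
        elif len(description.split()) < min_description_words:
            problems.append("description too short to describe the product")
    return problems

# Hypothetical markup resembling the Shopify store described above.
bad = {"name": "Trail Boot", "description": "Buy Trail Boot online at Example Store. Free shipping."}
for problem in audit(bad):
    print(problem)
```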

Golden Fact: Products with complete Schema.org markup get cited in AI Overviews 6.7x more often than products without it.

If you’re on Shopify, most themes handle basic Product schema automatically. The problem is they don’t handle relationships. You need to manually add “isRelatedTo” or “isSimilarTo” properties to connect complementary products. That’s how you build a knowledge graph instead of a flat catalog.
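Here is a small sketch of attaching those relationships to existing markup. `isRelatedTo` and `isSimilarTo` are real Schema.org properties on Product; the helper name `link_related`, the products, and the URLs are hypothetical.

```python
import json

def link_related(product: dict, related: list[dict], prop: str = "isRelatedTo") -> dict:
    """Return a copy of the product markup with related products attached."""
    if prop not in ("isRelatedTo", "isSimilarTo"):
        raise ValueError(f"unsupported relationship property: {prop}")
    linked = dict(product)  # shallow copy so the original markup is untouched
    linked[prop] = [
        {"@type": "Product", "name": item["name"], "url": item["url"]}
        for item in related
    ]
    return linked

# Hypothetical catalog entries.
boot = {"@context": "https://schema.org", "@type": "Product", "name": "Example Hiking Boot"}
socks = {"name": "Example Merino Hiking Sock", "url": "https://example.com/products/merino-sock"}

linked = link_related(boot, [socks])
print(json.dumps(linked, indent=2))
```

Use `isRelatedTo` for complementary items (boots and socks) and `isSimilarTo` for functional alternatives (two comparable boots).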

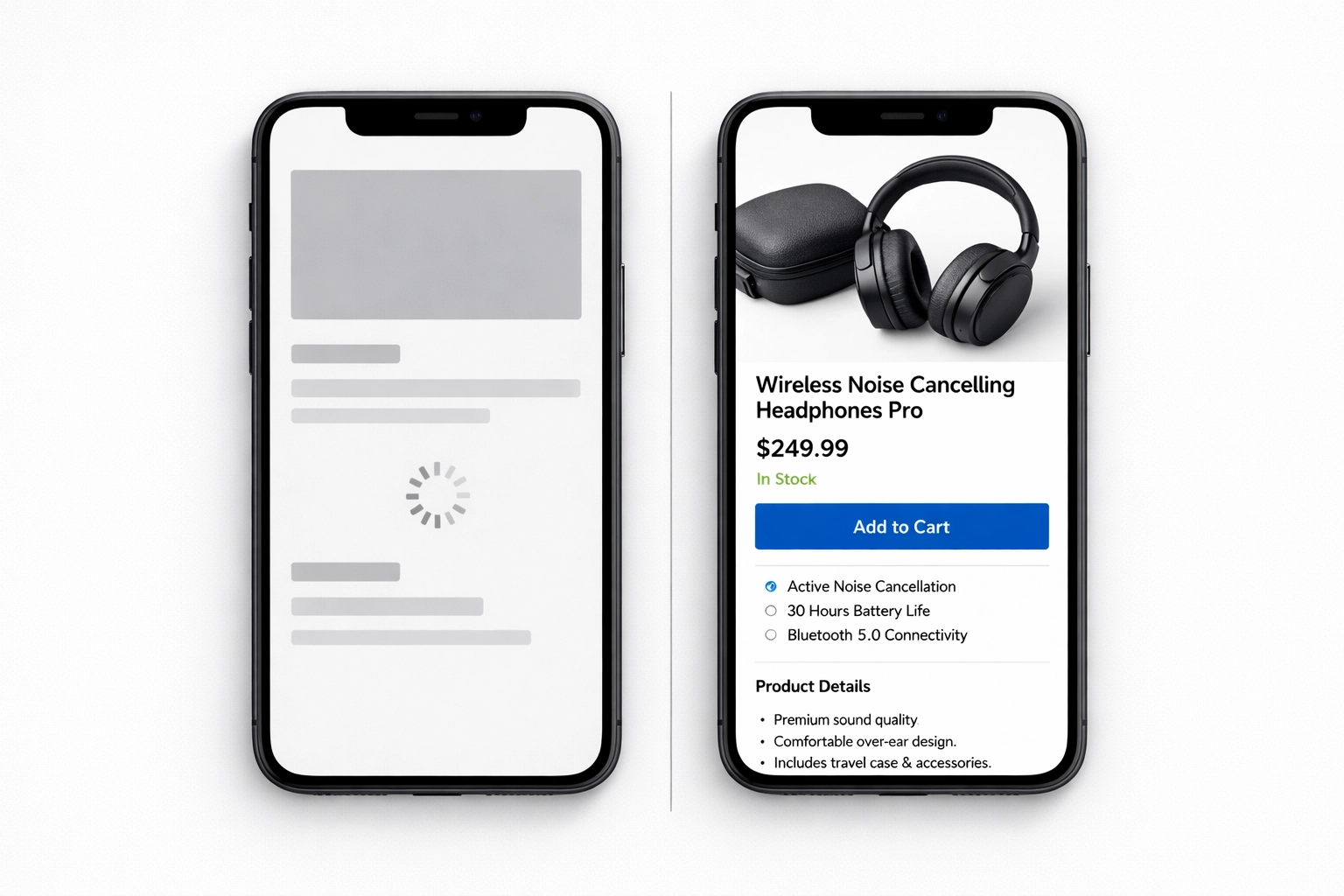

3. Make Your Content Crawlable by AI (SSR or Die)

Here’s a technical gotcha that’s killing stores: if your content requires JavaScript execution to render, AI agents might never see it.

Google’s crawler can handle client-side rendering now, but it’s slow and resource-intensive. The crawlers that gather content for LLM citations? They’re even less forgiving. If your product specifications are loaded via a Vue.js component that fetches data from an API after page load, there’s a good chance Perplexity’s crawler times out before seeing them.

According to EcommerceTech’s December 2025 analysis, moving to Server-Side Rendering (SSR) is a make-or-break feature for 2026. If the crawler has to execute JavaScript and wait for async data fetches, you’re adding 2-4 seconds to the discovery process. Most AI agents won’t wait.

If you’re on a headless stack (Next.js, Nuxt, SvelteKit), SSR should be your default. Every product page should deliver fully rendered HTML with complete content on first load. No skeletons. No spinners. No “Loading…” placeholders.

If you’re on Magento 2 or Shopify, this is less of an issue, since most themes render server-side by default. But if you’ve added custom filtering or product configurators via React or Vue, audit those components. Use Chrome DevTools to disable JavaScript and see what actually renders. If key product details disappear, you have a problem.
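The same check can be approximated in a script: take the raw HTML your server returns, ignore anything inside script tags, and confirm the key details are present. This is a rough sketch using Python’s standard `html.parser`; the sample pages and the `missing_without_js` helper are hypothetical, and in practice you would feed it the response body fetched from your own product URLs.

```python
from html.parser import HTMLParser

class VisibleText(HTMLParser):
    """Collect text that sits outside <script>, <style>, and <template> tags."""
    SKIP = {"script", "style", "template"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)

def missing_without_js(raw_html, must_have):
    """Return the required strings that are absent from the unrendered HTML text."""
    parser = VisibleText()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(" ".join(parser.chunks).split()).lower()
    return [s for s in must_have if s.lower() not in text]

# Hypothetical server-rendered and client-rendered versions of one product page.
ssr_html = "<html><body><h1>Trail Boot</h1><ul><li>Weight: 450 g</li></ul></body></html>"
csr_html = '<html><body><div id="app">Loading...</div><script>fetch("/api/specs")</script></body></html>'

required = ["Trail Boot", "Weight: 450 g"]
print("SSR missing:", missing_without_js(ssr_html, required))
print("CSR missing:", missing_without_js(csr_html, required))
```

Note that this deliberately skips script contents, so JSON-LD blocks are not counted here; it only tests whether the human-readable specs exist before any JavaScript runs.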

I’ve written extensively elsewhere about technical SEO auditing for ecommerce, including the crawl budget implications of render-blocking JavaScript.

The AI Citation Economy Is Here

Let me be blunt: rankings are becoming less valuable than citations.

When someone asks ChatGPT “What’s the best waterproof trail running shoe under $150?”, the response doesn’t include ten blue links. It includes 2-3 product recommendations with direct citations to source material. If your product isn’t in that list, you don’t exist.

This is fundamentally different from traditional SEO. You can’t “optimize” your way into an AI citation by stuffing more keywords into your description. The LLM evaluates your product based on:

- Structured attribute data (Is it actually waterproof? What’s the waterproof rating?)

- Review sentiment (Do users confirm it works in wet conditions?)

- Price competitiveness (Does it fit the budget constraint?)

- Authority signals (Is your site a known entity for outdoor gear?)

The stores winning this game are the ones that publish rich, verifiable data and build topical authority around product categories. They’re not trying to trick the algorithm. They’re trying to be the most complete, accurate source of information in their niche.

That’s a fundamentally different approach from the one most SEO agencies are selling.

What This Means for Your Store Right Now

If you’re still optimizing for keyword density in 2026, you’re fighting the last war.

Here’s what matters now:

- Entity mapping: Can an AI agent understand what your products are and how they relate to each other?

- Structured data completeness: Is every attribute machine-readable?

- Topical authority: Does your content prove you’re an expert in your category?

- Render performance: Can crawlers see your content without executing JavaScript?

This isn’t about following a checklist. It’s about fundamentally rethinking how you structure product information. The brands that win in the next five years won’t be the ones with the best keyword research. They’ll be the ones that build the cleanest, most authoritative knowledge graphs.

I work with ecommerce stores in Boise and remotely to audit semantic readiness: identifying gaps in entity structure, Schema implementation, and topical authority. If you want a forensic breakdown of where your store stands in the AI citation economy, let’s talk. No pitch decks. No agency fluff. Just a detailed diagnostic of whether your products can actually be understood by the machines that now control search.

Your competitors are already making this shift. The question is whether you’ll catch up before you’re invisible.