I’m going to be blunt: if you’re still treating technical SEO like it’s just “the basics” that any junior dev can handle, you’re about to get steamrolled by AI-powered search, and you won’t even see it coming.

TL;DR: Technical SEO isn’t just more important in 2026; it’s the only thing standing between your site and total invisibility in AI-driven search results. If an AI crawler can’t fetch your pages, render them, or parse your structured data, you don’t exist in the answer box. Period.

The conversation around technical SEO has gotten soft. People treat it like a checkbox exercise: “Yeah, we fixed the robots.txt, we’re good.” Meanwhile, 72% of websites fail at least one critical technical SEO factor, and those sites are bleeding organic traffic they’ll never get back.

I’m writing this from Boise on a Thursday morning because I just finished an audit where a seven-figure ecommerce brand had been ignoring server-side rendering issues for eighteen months. Their product pages weren’t being picked up by Perplexity or Google’s AI Overviews (the feature formerly called SGE). They thought they had an “SEO problem.” They had a technical architecture problem.

The Shift Nobody Saw Coming: Keywords Are Dead, Entities Are Everything

Here’s what changed while you were obsessing over keyword density and meta descriptions.

Search engines, and more importantly, Large Language Models, don’t care about your keywords anymore. They care about entities. They care about structured, machine-readable data that tells them what your business is, what problems you solve, and how you relate to other entities in the knowledge graph.

Google’s not ranking web pages for “best CRM software” anymore. It’s ranking CRM software entities that have clean schema markup, clear relationships to use cases, and technically sound implementations. If your site can’t communicate these relationships in a way an AI can parse, you’re invisible.

This isn’t theoretical. When I run a site through Screaming Frog and see missing or broken structured data, I know exactly what’s happening: that site is being systematically deprioritized in AI-generated answers. ChatGPT won’t recommend you. Perplexity won’t surface you. Google’s AI Overviews will skip right past you to a competitor who took schema seriously.

Golden Fact: Proper technical optimization increases organic traffic by an average of 30%, according to Semrush’s latest benchmark data. That’s not marginal. That’s the difference between profitability and irrelevance.

Why Server-Side Rendering Still Matters (Maybe More Than Ever)

Let me guess: someone told you that Google can “handle JavaScript now” so you don’t need to worry about rendering.

That person was wrong.

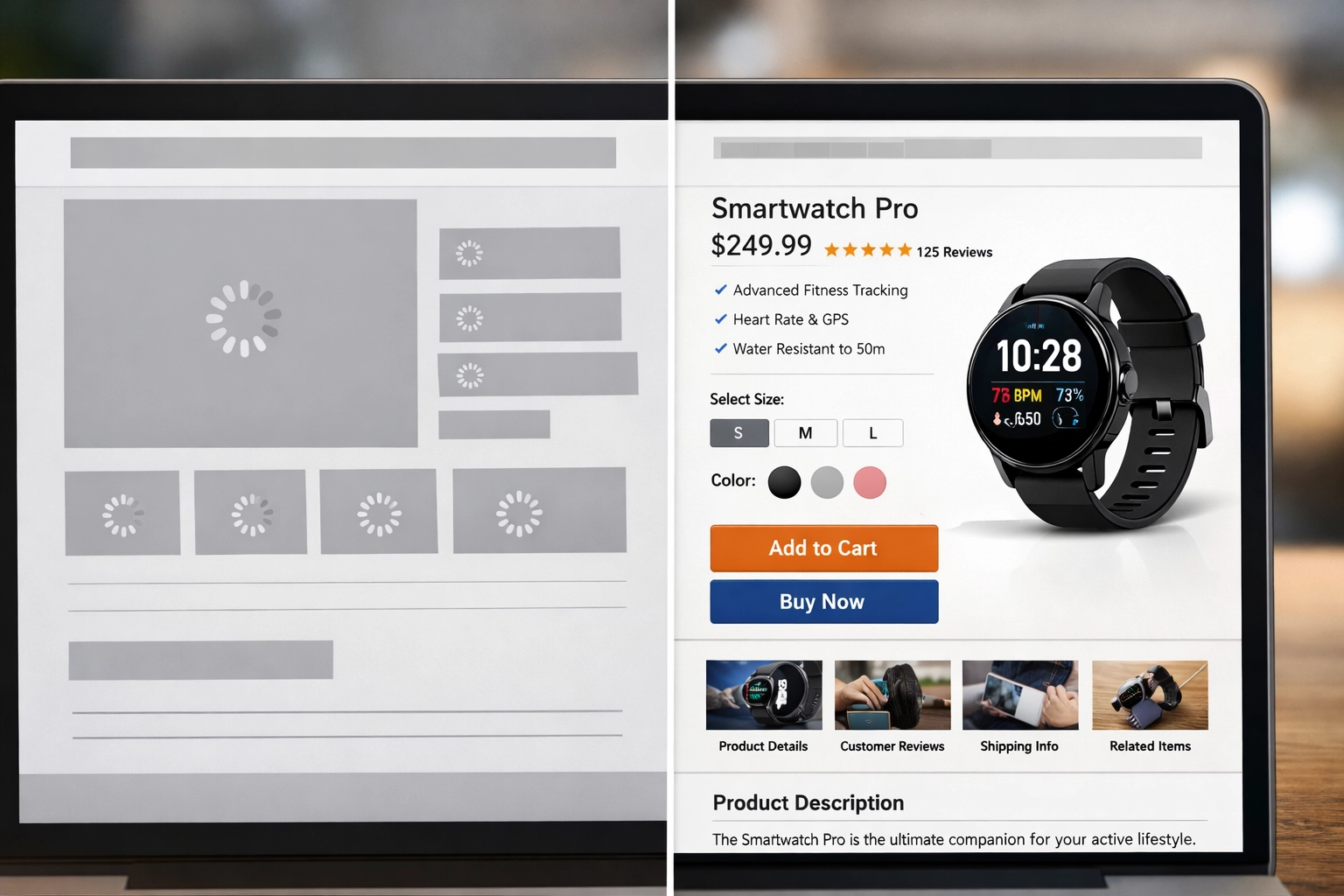

Yes, Googlebot can attempt to render JavaScript. But AI crawlers like those powering Perplexity, Claude, or ChatGPT’s web browsing? Many of them reportedly don’t execute JavaScript at all and work from the raw HTML response. If your content requires client-side rendering, multiple API calls, and three seconds of JavaScript execution before anything meaningful loads, you’re creating friction that AI bots won’t tolerate.

Here’s what I see constantly:

- Headless commerce sites with beautiful React frontends that serve nothing but skeleton loaders to bots

- Dynamic filtering that creates crawl traps because the URLs aren’t properly canonicalized

- Lazy-loaded content that never materializes for non-browser crawlers

The solution isn’t “better JavaScript.” It’s server-side rendering or edge delivery with proper caching. When a bot hits your page, it should get fully-formed HTML with structured data already embedded. No waiting. No “hydration.” No excuses.
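Here’s a rough way to check what a non-rendering bot actually receives. This is a minimal sketch, not a real crawler: it takes the raw HTML exactly as served, counts the visible words, and lists any JSON-LD it finds. The names `RawHtmlAudit` and `audit_raw_html` and the 50-word skeleton threshold are my own assumptions for illustration.

```python
import json
from html.parser import HTMLParser


class RawHtmlAudit(HTMLParser):
    """Collects visible text and JSON-LD from HTML as served (no JavaScript is run)."""

    SKIP_TAGS = {"script", "style", "noscript", "template"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0     # >0 while inside a tag whose text is not visible
        self._in_jsonld = False
        self._buffer = []
        self.text_chunks = []
        self.jsonld_blocks = []  # JSON-LD payloads that parsed successfully
        self.invalid_jsonld = 0  # JSON-LD blocks that were present but not valid JSON

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP_TAGS:
            self._skip_depth += 1
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            try:
                self.jsonld_blocks.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                self.invalid_jsonld += 1
            self._in_jsonld = False
        if tag in self.SKIP_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)
        elif self._skip_depth == 0 and data.strip():
            self.text_chunks.append(data.strip())


def audit_raw_html(html: str, min_words: int = 50) -> dict:
    """Summarize what a crawler that never runs JavaScript would see.

    min_words is an arbitrary cut-off (an assumption); tune it per page type.
    """
    parser = RawHtmlAudit()
    parser.feed(html)
    parser.close()
    word_count = sum(len(chunk.split()) for chunk in parser.text_chunks)
    types = []
    for block in parser.jsonld_blocks:
        items = block if isinstance(block, list) else [block]
        # A block without "@type" shows up as None, which is itself a warning sign.
        types.extend(item.get("@type") for item in items if isinstance(item, dict))
    return {
        "word_count": word_count,
        "looks_like_skeleton": word_count < min_words,
        "jsonld_types": types,
        "invalid_jsonld_blocks": parser.invalid_jsonld,
    }
```

Feed it the response body you get from a plain HTTP fetch of a product page. If `looks_like_skeleton` comes back true or `jsonld_types` is empty, the content only exists after JavaScript runs.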

I recently worked with a Shopify Plus merchant who moved from a heavily JavaScript-dependent theme to a server-side rendered solution. Their indexation rate jumped 40% in six weeks. Why? Because Google and AI crawlers could actually see the content without burning compute cycles on rendering.

If you’re using WordPress, this is where something like WP Engine’s edge caching becomes non-negotiable. If you’re on Magento or a custom stack, you need to audit how your pages are being delivered to bots versus browsers.

Structured Data Is the Bridge Between Your Site and LLMs

Schema.org markup is no longer optional. It’s the only way AI systems can confidently extract and trust information from your site.

Think about how an LLM processes a web page. It’s not reading your flowery prose and “getting the vibe.” It’s looking for machine-readable signals:

- Organization schema to understand who you are

- Product schema to extract price, availability, and reviews

- FAQPage schema to pull direct answers

- BreadcrumbList to understand site hierarchy

- LocalBusiness schema if you have physical locations

Without this, you’re forcing the AI to guess. And when AI guesses, it defaults to sources with cleaner data. Your competitor who implemented proper schema six months ago? They’re getting cited. You’re not.

Here’s the part that pisses me off: implementing schema is not hard. JSON-LD is straightforward. Google’s Rich Results Test and the Schema Markup Validator (the replacements for the retired Structured Data Testing Tool) tell you exactly what’s broken. Yet the majority of sites I audit either have no schema or have schema so poorly implemented that it’s worse than having none.

I’m not talking about advanced stuff here. I’m talking about basic Product, Article, and WebPage schema. If you’re an ecommerce site without Product schema on every SKU, you’re essentially invisible to AI-powered search.
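To show how little is involved, here’s a minimal sketch of generating Product JSON-LD server-side. The helper names `product_jsonld` and `to_script_tag` and the example values are hypothetical; the property names (`offers`, `priceCurrency`, `availability`) come from the Schema.org Product and Offer types. A real catalog would add images, brand, reviews, and so on.

```python
import json


def product_jsonld(name: str, sku: str, price: float, currency: str,
                   in_stock: bool, url: str) -> dict:
    """Build a bare-bones Schema.org Product with a single Offer."""
    availability = "InStock" if in_stock else "OutOfStock"
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "url": url,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": f"https://schema.org/{availability}",
        },
    }


def to_script_tag(data: dict) -> str:
    """Serialize to a JSON-LD script tag that can be embedded in server-rendered HTML."""
    # Escape "</" so a value containing "</script>" cannot close the tag early.
    # "\/" is a legal JSON escape, so the payload still parses to the same data.
    payload = json.dumps(data, ensure_ascii=False).replace("</", "<\\/")
    return f'<script type="application/ld+json">{payload}</script>'


# Hypothetical product, for illustration only.
tag = to_script_tag(
    product_jsonld("Trail Shoe", "TS-001", 89.5, "USD", True,
                   "https://shop.example/p/ts-001")
)
```

The point is that the markup is built from the same data that renders the page, so price and availability in the schema can’t drift from what the shopper sees.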

The Technical Debt Trap: How Messy Code Kills Modern Search

Let’s talk about what happens when you ignore technical SEO for a year or two.

You accumulate what I call technical debt: the compound interest of bad decisions. Broken redirects. Orphaned pages. Duplicate content that never got canonicalized. JavaScript errors that silently fail. Crawl budget waste on parameter URLs.

This stuff matters more in 2026 because Google’s crawl efficiency and indexing standards have become stricter. Sites with technical flaws are being ranked lower, faster than ever before.
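Parameter URLs are the easiest piece of this debt to reason about in code. The sketch below maps URL variants to one canonical form by dropping parameters that don’t change the page and sorting the rest. The `IGNORED_PARAMS` list is an assumption on my part (whether `sort` belongs there depends on your catalog), and `canonical_url` is a hypothetical helper, not part of any platform.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed policy: parameters that never change what the page is about.
IGNORED_PARAMS = frozenset({
    "utm_source", "utm_medium", "utm_campaign", "gclid", "fbclid", "sort",
})


def canonical_url(url: str, ignored=IGNORED_PARAMS) -> str:
    """Return one stable URL for all variants of the same page."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in ignored
    )
    path = parts.path or "/"
    # Drop the fragment; it is never sent to the server.
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(), path, urlencode(kept), ""))
```

Run every URL from a crawl export through something like this and group by the result. Any group with more than one member is a set of duplicates that should share a single `rel="canonical"` target.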

What does technical debt look like in practice?

- Redirect chains that waste crawl budget and dilute link equity

- Missing or incorrect hreflang tags for international sites

- Unoptimized images that torpedo Core Web Vitals

- Mixed content warnings (HTTP resources on HTTPS pages)

- Unclosed tags and malformed HTML that confuse parsers
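
Several items on this list can be caught mechanically. As one example, here’s a minimal sketch that flags mixed content by scanning a page’s HTML for subresources loaded over plain HTTP. It’s deliberately simplified: `find_mixed_content` is a hypothetical helper, it only looks at a handful of tags, and it ignores things like CSS `url()` references and `srcset`.

```python
from html.parser import HTMLParser


class MixedContentFinder(HTMLParser):
    """Records subresources that an HTTPS page would load over plain HTTP."""

    SRC_TAGS = {"img", "script", "iframe", "audio", "video", "source"}

    def __init__(self):
        super().__init__()
        self.insecure = []  # list of (tag, url) pairs

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        candidate = None
        if tag in self.SRC_TAGS:
            candidate = attr_map.get("src")
        elif tag == "link":
            # Only stylesheets are fetched as page resources here;
            # rel="canonical" and similar links are just references.
            rel = (attr_map.get("rel") or "").lower().split()
            if "stylesheet" in rel:
                candidate = attr_map.get("href")
        if candidate and candidate.strip().lower().startswith("http://"):
            self.insecure.append((tag, candidate.strip()))


def find_mixed_content(html: str) -> list:
    """Return (tag, url) pairs for insecure subresources found in the HTML."""
    finder = MixedContentFinder()
    finder.feed(html)
    finder.close()
    return finder.insecure
```

Ordinary `<a href="http://...">` links are skipped on purpose: linking out to an HTTP page is not mixed content, loading a resource from one is.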

AI crawlers are even less forgiving than Google. They don’t have infinite patience. If your site is a technical mess, they move on to cleaner sources.

I recently audited a Magento 2 site with 14,000 products. They had 47 different redirect chains, some going five levels deep. Their crawl efficiency was abysmal. Fixing just the redirect architecture alone improved their indexation rate by 22%.
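Flattening chains like that is mostly bookkeeping. Here’s a minimal sketch, assuming you’ve exported your redirect rules as a simple source-to-target mapping (the function names and the `max_hops` limit are hypothetical, not from any Magento tooling). It follows each rule to its final destination and rewrites every source to point there directly, skipping anything caught in a loop.

```python
def resolve_redirects(redirects: dict, max_hops: int = 10) -> dict:
    """For every source URL, return the full list of hops it currently takes."""
    chains = {}
    for start in redirects:
        chain = [start]
        seen = {start}
        current = start
        while current in redirects and len(chain) <= max_hops:
            current = redirects[current]
            chain.append(current)
            if current in seen:  # loop detected, stop following
                break
            seen.add(current)
        chains[start] = chain
    return chains


def flatten_redirects(redirects: dict, max_hops: int = 10) -> dict:
    """Point every source straight at its final destination (one hop each)."""
    flat = {}
    for start, chain in resolve_redirects(redirects, max_hops).items():
        final = chain[-1]
        if final in redirects:
            # The chain ended in a loop or exceeded max_hops: needs a human.
            continue
        flat[start] = final
    return flat


# Hypothetical rules: /a -> /b -> /c -> /d is a three-hop chain.
rules = {"/a": "/b", "/b": "/c", "/c": "/d", "/old": "/new"}
flat_rules = flatten_redirects(rules)
long_chains = {src: hops for src, hops in resolve_redirects(rules).items() if len(hops) > 2}
```

`long_chains` is the audit list (anything taking more than one hop), and `flat_rules` is what you’d deploy once it’s been reviewed.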

Golden Fact: Technical SEO is non-negotiable for ensuring your site is crawlable and indexable. Without this foundation, no amount of quality content will rank effectively.

The ‘AI Answer Box’ Problem You’re Not Thinking About

Here’s the uncomfortable truth: traditional “ranking” is becoming less relevant.

When someone asks ChatGPT or Perplexity a question, they’re not clicking through to position #3 in a SERP. They’re getting an AI-generated answer that either cites your site or doesn’t. Binary. All or nothing.

How does an LLM decide whether to cite you?

It looks at:

- Technical trustworthiness: Is your site fast, secure, and error-free?

- Structured data quality: Can the AI extract clean facts?

- Content freshness: Are you regularly updated, or is your last-modified date from 2019?

- Entity relationships: Are you connected to authoritative entities in the knowledge graph?

All of this is technical SEO. None of it is about “writing better content” or “building backlinks.” It’s about whether your site can be trusted by a machine.

I’m seeing this play out in real time with clients. The ones who invested in technical foundations (proper schema, clean rendering, optimized performance) are getting cited in AI answers. The ones who ignored technical work are getting ghosted.

What This Means for You Right Now

If you’re running an ecommerce platform, a SaaS product site, or any business that relies on organic visibility, you need to stop thinking of technical SEO as “maintenance” and start thinking of it as competitive infrastructure.

The sites winning in 2026 are the ones that:

- Serve fully-rendered HTML to all crawlers

- Implement comprehensive structured data across every page type

- Maintain Core Web Vitals in the green

- Have zero critical technical errors (broken redirects, 404s, duplicate content)

- Use proper canonicalization and hreflang for multi-language or multi-region sites

This isn’t aspirational. This is table stakes for being discoverable in AI-powered search.
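
“In the green” has a concrete meaning for the Core Web Vitals item in the checklist above. Google’s published thresholds, assessed at the 75th percentile of real-user data, are: LCP good at 2.5 s or less and poor above 4 s, INP good at 200 ms or less and poor above 500 ms, CLS good at 0.1 or less and poor above 0.25. The sketch below encodes just that; the function names and the shape of the input dict are my own assumptions.

```python
# (good_max, needs_improvement_max) per metric, per Google's published thresholds.
THRESHOLDS = {
    "lcp_ms": (2500, 4000),  # Largest Contentful Paint, milliseconds
    "inp_ms": (200, 500),    # Interaction to Next Paint, milliseconds
    "cls": (0.1, 0.25),      # Cumulative Layout Shift, unitless
}


def rate(metric: str, value: float) -> str:
    """Classify one 75th-percentile value as good / needs improvement / poor."""
    good_max, needs_improvement_max = THRESHOLDS[metric]
    if value <= good_max:
        return "good"
    if value <= needs_improvement_max:
        return "needs improvement"
    return "poor"


def passes_core_web_vitals(p75: dict) -> bool:
    """True only when all three metrics are rated good."""
    return all(rate(metric, p75[metric]) == "good" for metric in THRESHOLDS)
```

A page with `{"lcp_ms": 2100, "inp_ms": 350, "cls": 0.05}` fails this check on INP alone, which is the kind of thing that gets lost when a dashboard only reports an average score.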

Forensic Summary

Look, I’m not here to sell you on SEO as a concept. You already know it matters. What I’m telling you is that the technical foundation of your site is now the primary ranking factor for AI-driven search: not secondary, not “nice to have,” but primary.

If you’re not sure where your site stands, that’s a problem you can solve. I run forensic technical audits that surface the exact issues preventing AI crawlers from trusting your site. No fluff, no generic recommendations, just the specific fixes that will move the needle.

Want to know what’s actually breaking your site’s AI discoverability? Let’s run a diagnostic.